[ad_1]

As we proceed to combine generative AI into our every day lives, it’s essential to grasp the potential harms that may come up from its use. Our ongoing dedication to advance secure, safe, and reliable AI contains transparency in regards to the capabilities and limitations of enormous language fashions (LLMs). We prioritize analysis on societal dangers and constructing safe, secure AI, and concentrate on creating and deploying AI methods for the general public good. You possibly can learn extra about Microsoft’s method to securing generative AI with new instruments we lately introduced as out there or coming quickly to Microsoft Azure AI Studio for generative AI app builders.

We additionally made a dedication to determine and mitigate dangers and share data on novel, potential threats. For instance, earlier this yr Microsoft shared the ideas shaping Microsoft’s coverage and actions blocking the nation-state superior persistent threats (APTs), superior persistent manipulators (APMs), and cybercriminal syndicates we monitor from utilizing our AI instruments and APIs.

On this weblog publish, we are going to focus on a number of the key points surrounding AI harms and vulnerabilities, and the steps we’re taking to handle the chance.

The potential for malicious manipulation of LLMs

One of many fundamental considerations with AI is its potential misuse for malicious functions. To forestall this, AI methods at Microsoft are constructed with a number of layers of defenses all through their structure. One function of those defenses is to restrict what the LLM will do, to align with the builders’ human values and targets. However typically dangerous actors try and bypass these safeguards with the intent to attain unauthorized actions, which can lead to what is called a “jailbreak.” The implications can vary from the unapproved however much less dangerous—like getting the AI interface to speak like a pirate—to the very critical, equivalent to inducing AI to supply detailed directions on methods to obtain unlawful actions. In consequence, a great deal of effort goes into shoring up these jailbreak defenses to guard AI-integrated purposes from these behaviors.

Whereas AI-integrated purposes may be attacked like conventional software program (with strategies like buffer overflows and cross-site scripting), they can be susceptible to extra specialised assaults that exploit their distinctive traits, together with the manipulation or injection of malicious directions by speaking to the AI mannequin by means of the consumer immediate. We are able to break these dangers into two teams of assault strategies:

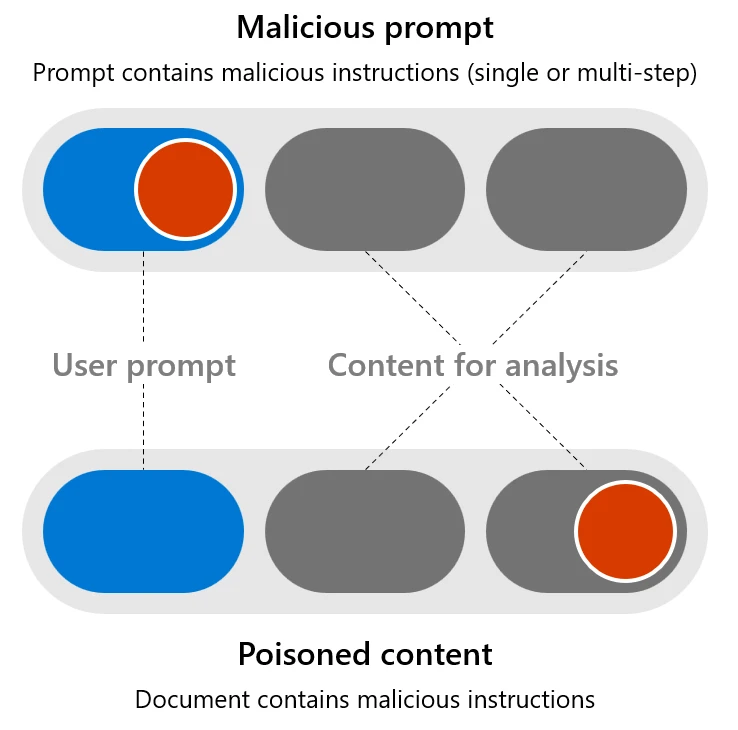

- Malicious prompts: When the consumer enter makes an attempt to bypass security methods to be able to obtain a harmful objective. Additionally known as consumer/direct immediate injection assault, or UPIA.

- Poisoned content material: When a well-intentioned consumer asks the AI system to course of a seemingly innocent doc (equivalent to summarizing an electronic mail) that incorporates content material created by a malicious third social gathering with the aim of exploiting a flaw within the AI system. Often known as cross/oblique immediate injection assault, or XPIA.

At the moment we’ll share two of our staff’s advances on this discipline: the invention of a strong approach to neutralize poisoned content material, and the invention of a novel household of malicious immediate assaults, and methods to defend in opposition to them with a number of layers of mitigations.

Neutralizing poisoned content material (Spotlighting)

Immediate injection assaults by means of poisoned content material are a serious safety danger as a result of an attacker who does this could doubtlessly difficulty instructions to the AI system as in the event that they have been the consumer. For instance, a malicious electronic mail might comprise a payload that, when summarized, would trigger the system to go looking the consumer’s electronic mail (utilizing the consumer’s credentials) for different emails with delicate topics—say, “Password Reset”—and exfiltrate the contents of these emails to the attacker by fetching a picture from an attacker-controlled URL. As such capabilities are of apparent curiosity to a variety of adversaries, defending in opposition to them is a key requirement for the secure and safe operation of any AI service.

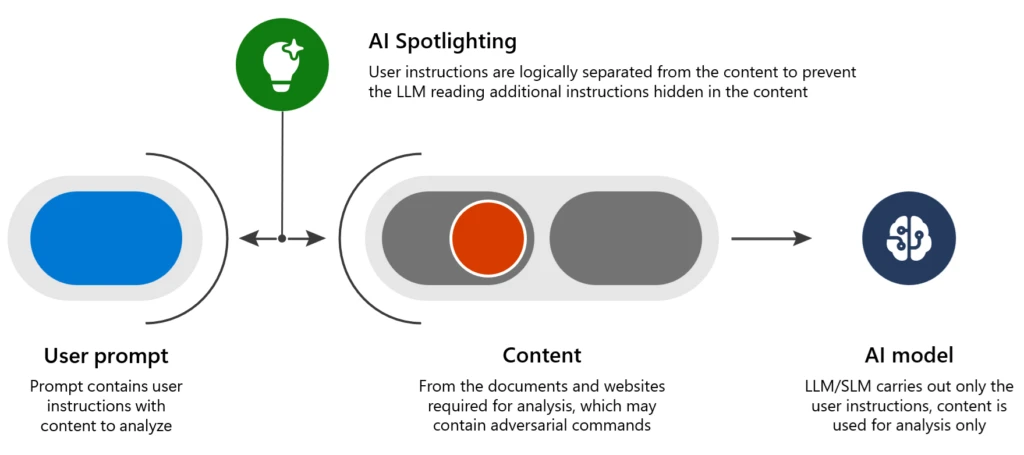

Our specialists have developed a household of strategies referred to as Spotlighting that reduces the success charge of those assaults from greater than 20% to under the brink of detection, with minimal impact on the AI’s general efficiency:

- Spotlighting (also referred to as knowledge marking) to make the exterior knowledge clearly separable from directions by the LLM, with totally different marking strategies providing a variety of high quality and robustness tradeoffs that rely upon the mannequin in use.

Mitigating the chance of multiturn threats (Crescendo)

Our researchers found a novel generalization of jailbreak assaults, which we name Crescendo. This assault can finest be described as a multiturn LLM jailbreak, and we’ve got discovered that it could possibly obtain a variety of malicious targets in opposition to probably the most well-known LLMs used at present. Crescendo also can bypass lots of the present content material security filters, if not appropriately addressed. As soon as we found this jailbreak approach, we rapidly shared our technical findings with different AI distributors so they may decide whether or not they have been affected and take actions they deem applicable. The distributors we contacted are conscious of the potential influence of Crescendo assaults and centered on defending their respective platforms, in response to their very own AI implementations and safeguards.

At its core, Crescendo methods LLMs into producing malicious content material by exploiting their very own responses. By asking fastidiously crafted questions or prompts that steadily lead the LLM to a desired end result, reasonably than asking for the objective all of sudden, it’s attainable to bypass guardrails and filters—this could often be achieved in fewer than 10 interplay turns. You possibly can examine Crescendo’s outcomes throughout a wide range of LLMs and chat providers, and extra about how and why it really works, in our analysis paper.

Whereas Crescendo assaults have been a stunning discovery, it is very important observe that these assaults didn’t straight pose a risk to the privateness of customers in any other case interacting with the Crescendo-targeted AI system, or the safety of the AI system, itself. Moderately, what Crescendo assaults bypass and defeat is content material filtering regulating the LLM, serving to to stop an AI interface from behaving in undesirable methods. We’re dedicated to repeatedly researching and addressing these, and different varieties of assaults, to assist preserve the safe operation and efficiency of AI methods for all.

Within the case of Crescendo, our groups made software program updates to the LLM expertise behind Microsoft’s AI choices, together with our Copilot AI assistants, to mitigate the influence of this multiturn AI guardrail bypass. It is very important observe that as extra researchers inside and out of doors Microsoft inevitably concentrate on discovering and publicizing AI bypass strategies, Microsoft will proceed taking motion to replace protections in our merchandise, as main contributors to AI safety analysis, bug bounties and collaboration.

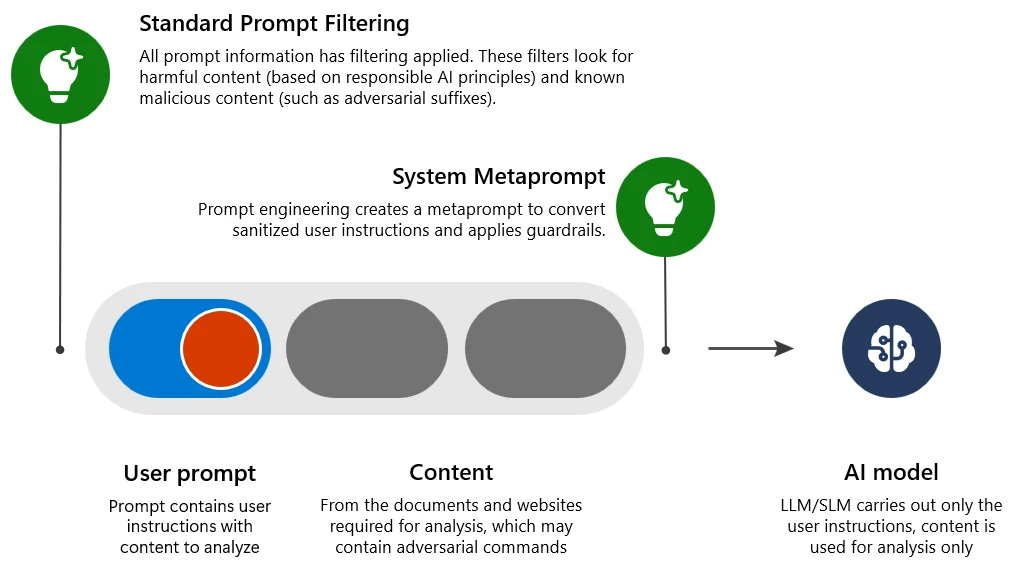

To know how we addressed the difficulty, allow us to first assessment how we mitigate an ordinary malicious immediate assault (single step, also referred to as a one-shot jailbreak):

- Customary immediate filtering: Detect and reject inputs that comprise dangerous or malicious intent, which could circumvent the guardrails (inflicting a jailbreak assault).

- System metaprompt: Immediate engineering within the system to obviously clarify to the LLM methods to behave and supply extra guardrails.

Defending in opposition to Crescendo initially confronted some sensible issues. At first, we couldn’t detect a “jailbreak intent” with normal immediate filtering, as every particular person immediate is just not, by itself, a risk, and key phrases alone are inadequate to detect one of these hurt. Solely when mixed is the risk sample clear. Additionally, the LLM itself doesn’t see something out of the abnormal, since every successive step is well-rooted in what it had generated in a earlier step, with only a small extra ask; this eliminates lots of the extra outstanding indicators that we might ordinarily use to stop this type of assault.

To resolve the distinctive issues of multiturn LLM jailbreaks, we create extra layers of mitigations to the earlier ones talked about above:

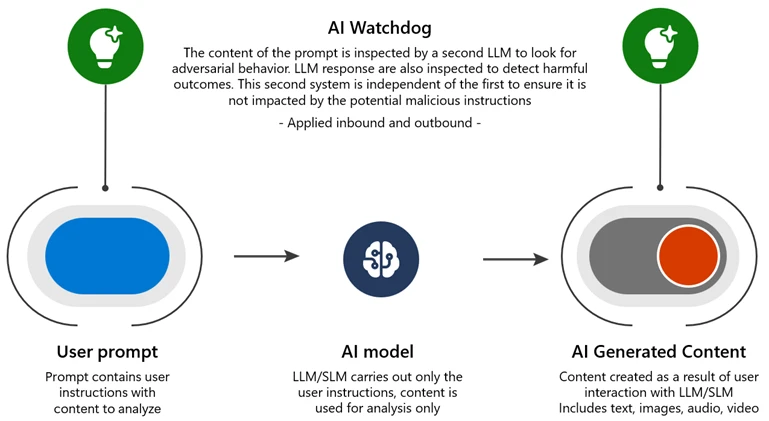

- Multiturn immediate filter: Now we have tailored enter filters to take a look at the complete sample of the prior dialog, not simply the quick interplay. We discovered that even passing this bigger context window to present malicious intent detectors, with out enhancing the detectors in any respect, considerably decreased the efficacy of Crescendo.

- AI Watchdog: Deploying an AI-driven detection system skilled on adversarial examples, like a sniffer canine on the airport trying to find contraband gadgets in baggage. As a separate AI system, it avoids being influenced by malicious directions. Microsoft Azure AI Content material Security is an instance of this method.

- Superior analysis: We spend money on analysis for extra complicated mitigations, derived from higher understanding of how LLM’s course of requests and go astray. These have the potential to guard not solely in opposition to Crescendo, however in opposition to the bigger household of social engineering assaults in opposition to LLM’s.

How Microsoft helps defend AI methods

AI has the potential to convey many advantages to our lives. However it is very important pay attention to new assault vectors and take steps to handle them. By working collectively and sharing vulnerability discoveries, we will proceed to enhance the security and safety of AI methods. With the precise product protections in place, we proceed to be cautiously optimistic for the way forward for generative AI, and embrace the chances safely, with confidence. To study extra about creating accountable AI options with Azure AI, go to our web site.

To empower safety professionals and machine studying engineers to proactively discover dangers in their very own generative AI methods, Microsoft has launched an open automation framework, PyRIT (Python Threat Identification Toolkit for generative AI). Learn extra in regards to the launch of PyRIT for generative AI Purple teaming, and entry the PyRIT toolkit on GitHub. In the event you uncover new vulnerabilities in any AI platform, we encourage you to observe accountable disclosure practices for the platform proprietor. Microsoft’s personal process is defined right here: Microsoft AI Bounty.

The Crescendo Multi-Flip LLM Jailbreak Assault

Examine Crescendo’s outcomes throughout a wide range of LLMs and chat providers, and extra about how and why it really works.

To study extra about Microsoft Safety options, go to our web site. Bookmark the Safety weblog to maintain up with our professional protection on safety issues. Additionally, observe us on LinkedIn (Microsoft Safety) and X (@MSFTSecurity) for the most recent information and updates on cybersecurity.

[ad_2]